Tag: Database

-

Troubleshooting: reviewing ORDS connections, your application server, and response times

Symptom/Issue: In an internal Slack thread today, a user was trying to diagnose browser latency while attempting to connect to the ORDS landing page. Peter suggested what I thought was a pretty neat heuristic for checking connections to ORDS and your database, as well as latency. Methodology: Let’s say your symptoms are either slow loading…


-

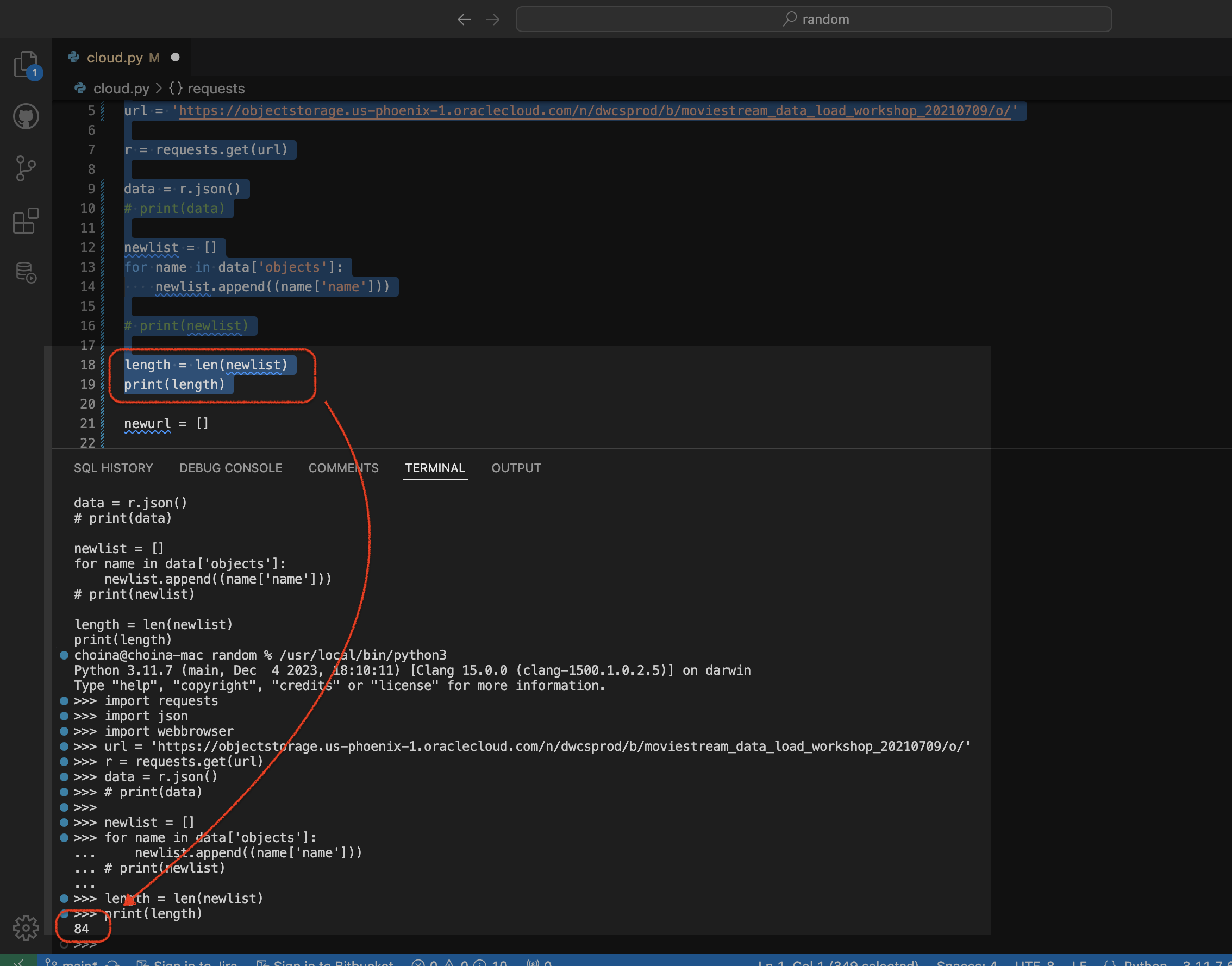

Python script to retrieve objects from Oracle Cloud Bucket

For…reasons, I needed a way to retrieve all the .CSV files in a regional bucket in Oracle Cloud Object Storage, located at this address: https://objectstorage.us-phoenix-1.oraclecloud.com/n/dwcsprod/b/moviestream_data_load_workshop_20210709/o You can visit it; we use it for one of our LiveLabs (this one), so I’m sure it will stay live for a while 😘. Once there, you’ll see all…


-

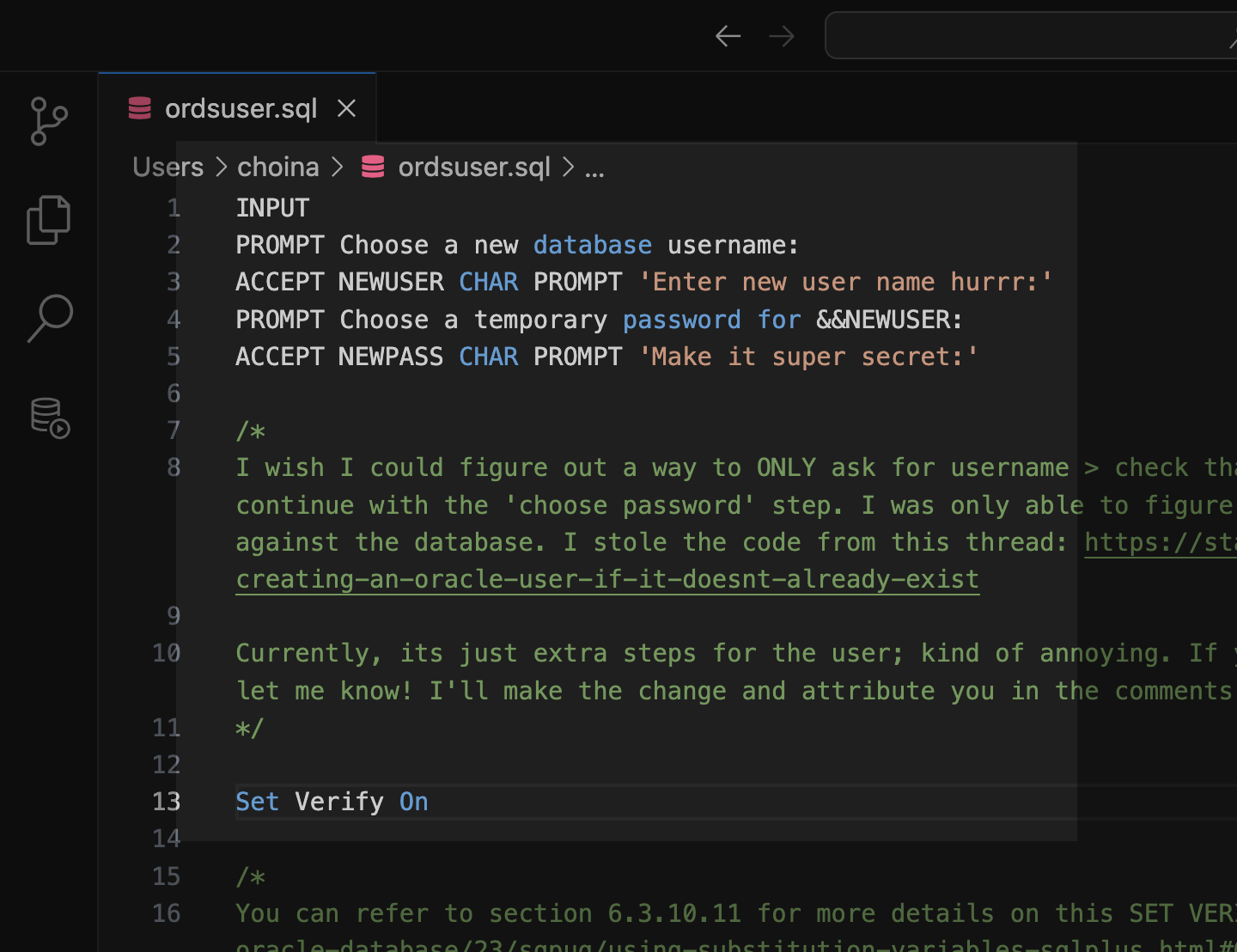

Tinkering: a SQL script for the ORDS_ADMIN.ENABLE_SCHEMA procedure

Post-ORDS installation: Once you’ve installed ORDS, you need to REST-enable your schema before taking advantage of ORDS (I used to forget this step, but now it’s like second nature). RESOURCES: I’ve discussed ORDS installation here and here. I’d check both pages if you’re unfamiliar with it or want a refresher. ORDS.ENABLE_SCHEMA / ORDS_ADMIN.ENABLE_SCHEMA: While logged into your…


-

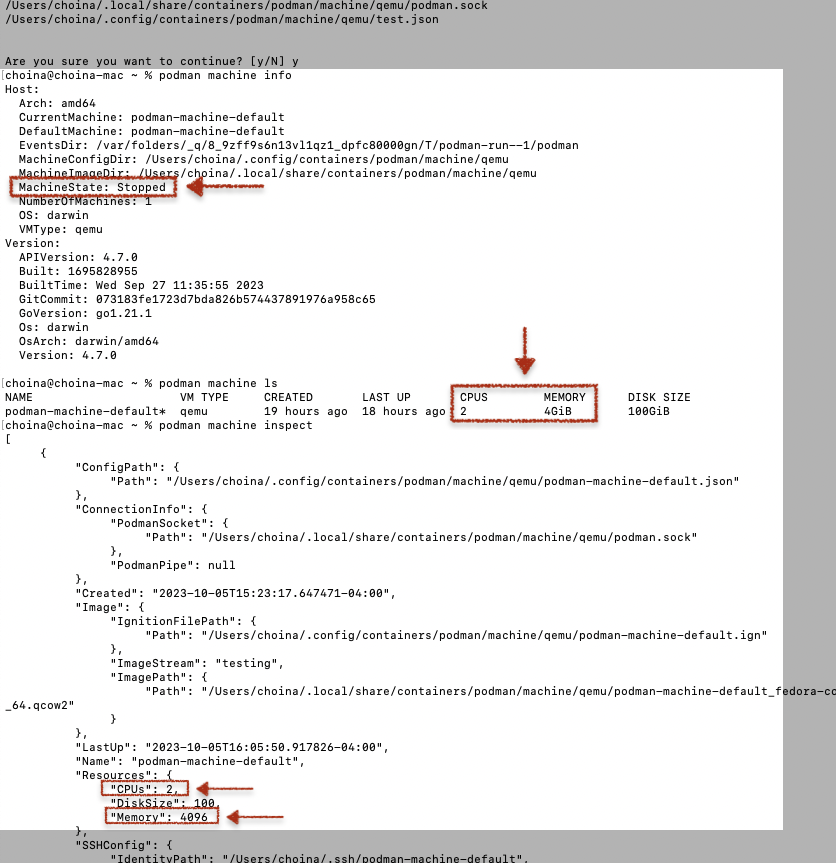

ORDS install considerations: choosing the correct host, port, service name, and pluggable database when the database is in a podman container

The other day, I wrote about how I had to start from scratch on my podman containers 😢. I’m now at the step where I need to reinstall ORDS in these two new database containers (21c and 23c). And since I’m doing this install yet again, I figured I would point out some things I’ve…


-

Podman container is unhealthy with Oracle database images

Problem Description: You’re working with podman containers (maybe like me – the ones from the Oracle Container Registry), and when you execute the podman ps command, you see something like this in the standard output: In this case, I already had another container with an Oracle 21c database; that one was healthy. I previously wrote up a…


-

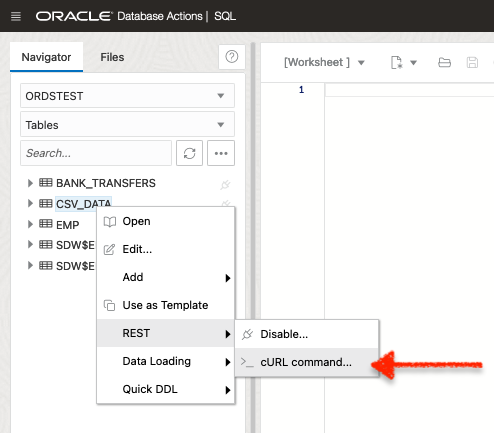

HELP!! parse error: Invalid numeric literal at line x, column x?! It’s not your Oracle REST API!!

A while back (yesterday), I penned a blog post highlighting the ORDS REST-Enabled SQL Service. In that post, I displayed the output of a cURL command I issued to an ORDS REST-Enabled SQL Service endpoint. Unfortunately, it was very messy and very unreadable. I mentioned that I would fix it later.…


-

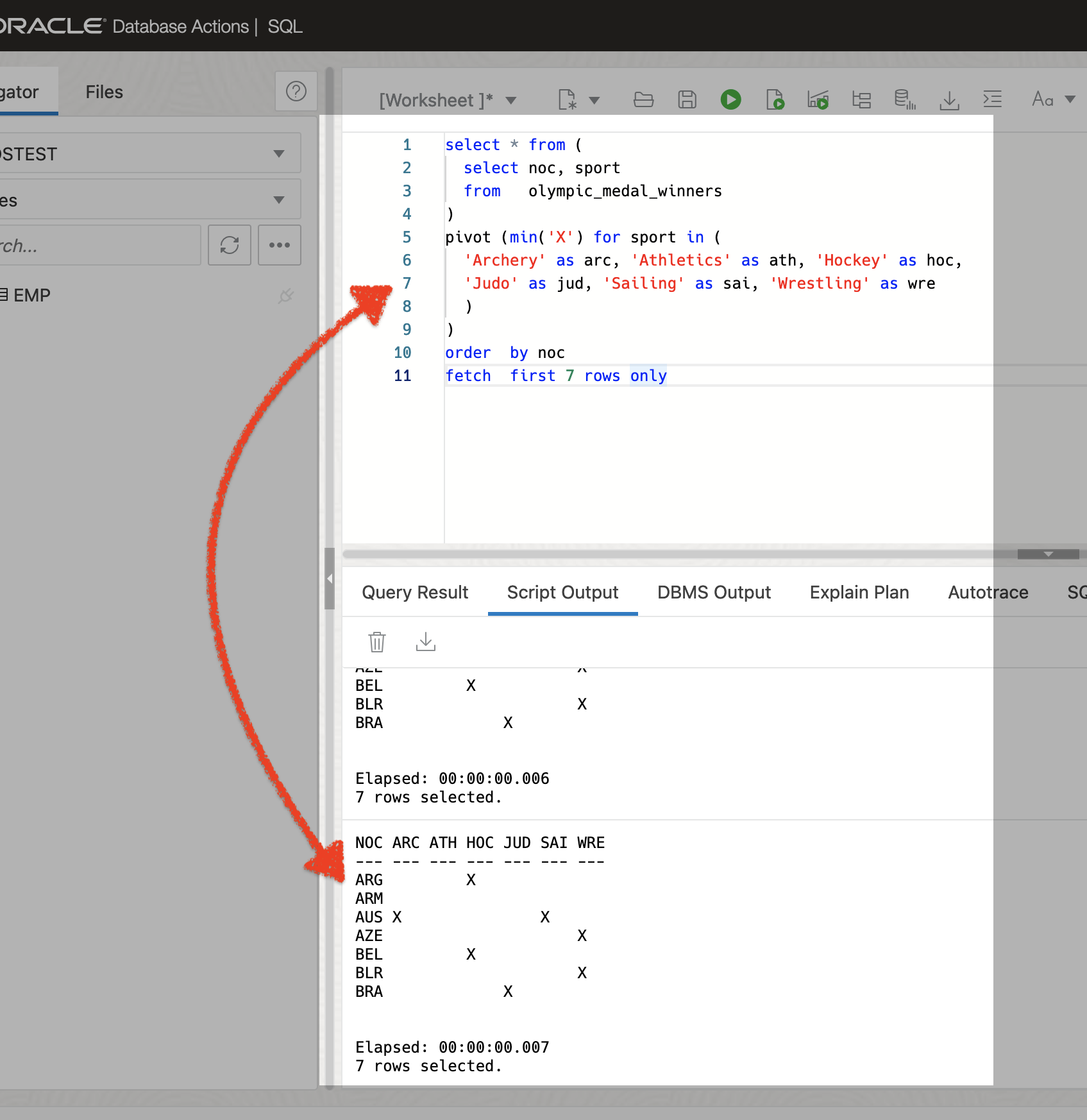

A quick ORDS REST-Enabled SQL Service example

I promise this post will connect back to an overarching theme. But for now, I want to show how you can take a SQL query and use that in combination with the ORDS REST-Enabled SQL Service to request data from a database table. The SQL query Here is the SQL query I’m using: The SQL…


-

User Guide: Oracle database in a Podman container, install ORDS locally, and access a SQL Worksheet on localhost

Summary: The title says it all. I’ve run through this about ten times now. But I’ll show you how to start a Podman container (with a volume attached) and install ORDS on your local machine. And then, once installed, we’ll create and REST-enable a user so that the user can take full advantage of Oracle…


-

Oracle Database REST APIs and Apple Automator Folder Actions

The plan was to create an Oracle REST endpoint and then POST a CSV file to that auto-REST-enabled table (you can see how I did that here, in section two of my most recent article). But, instead of doing this manually, I wanted to automate this POST request using Apple’s Automator application… Me…two paragraphs…
